Mapping Testing Story (My article in The Testing Planet)

Publication URL: http://www.thetestingplanet.com/2011/11/november-2011-issue-6/

Download PDF: The Testing Planet November Issue

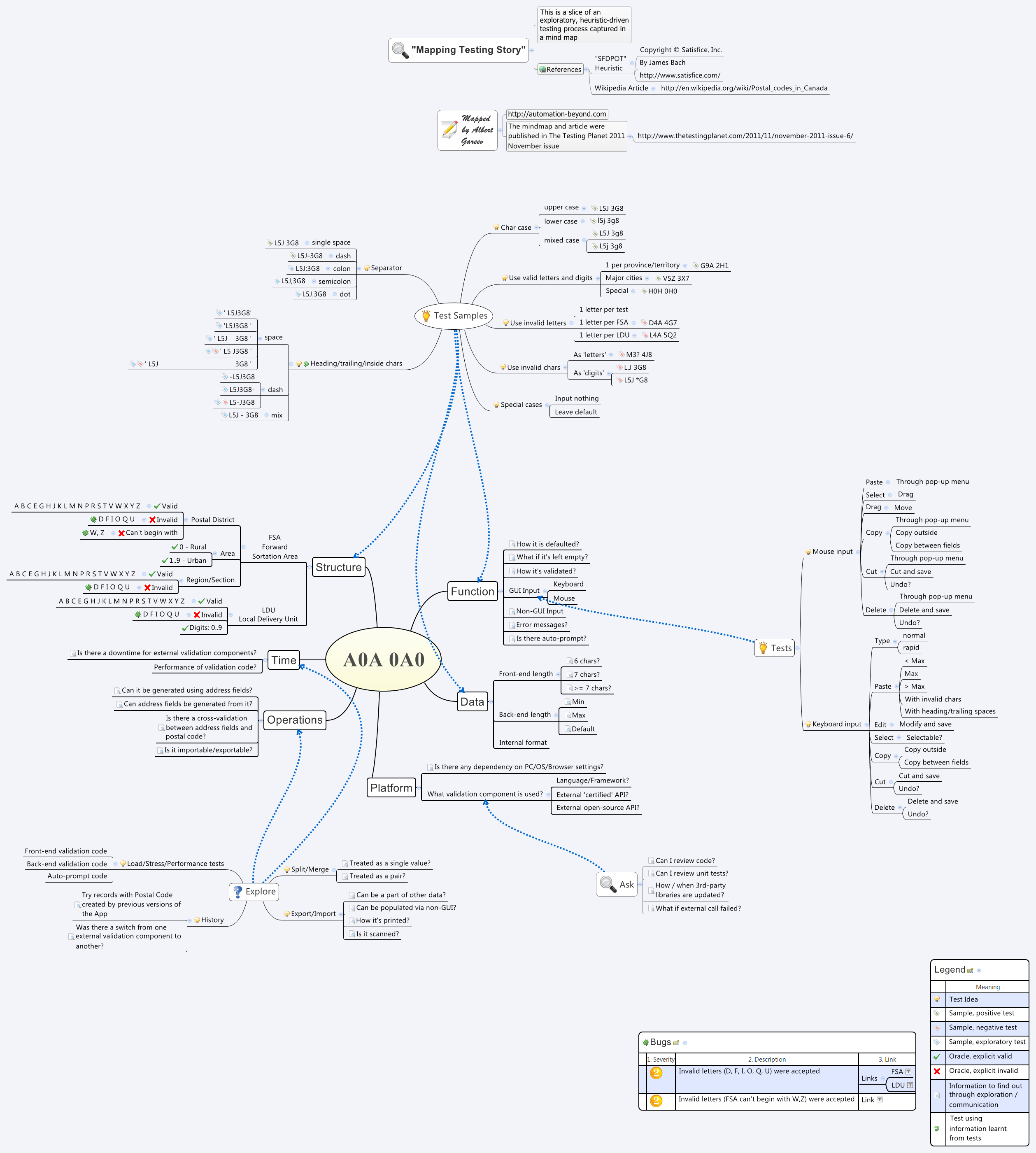

You can also view the large mind map here.

Update: 2017.

Since the original website (thetestingplanet.com) is no longer available, I am providing the full text here.

******

Mapping Testing Story

I am a follower and a strong proponent of the exploratory, context-driven, and heuristic-driven approach to testing. I could go on for pages about how powerful, flexible, enjoyable, challenging, and instructive I have found it. Instead, I’d like to share the story of a very special, twofold challenge in exploratory testing: introducing it to someone used only to a scripted approach, and documenting a dynamic, non-linear exploratory test.

The story is based on my real experience; however, all context-specific details were removed or replaced with fictional ones.

How would you teach someone who has never tried it before to ride a bicycle, without giving them a bicycle to practice on? Sure, you can describe what moves to make, and even explain the theory of static and dynamic balance. You can even give that person a ride, but it still won’t help them build the skill of riding a bicycle.

Demonstrating exploratory testing is no different from demonstrating a ride on a bicycle. People can observe the external part: mouse movements, keystrokes, and navigation actions, all of which may seem quite random, ad hoc, “without a purpose”. They can’t see what’s happening in your mind: the test ideas sparking in your head, or the models being structured and populated as you learn about the application. Written notes may partially help, and it’s useful to comment on your actions as you go. And yet creating a ‘user guide’ is nearly impossible, as it would rest on fixed assumptions and pre-scripted steps.

What I found, though, is that the structure of a mind map can help capture a ‘slice’ of a dynamic exploratory testing process. It preserves a non-linear structure, it’s rich, and it can always be expanded further.

The story began with a ‘simple’ test case..

..One morning I asked Marge, a tester on my team, whether there were any problems with the address form in the application, particularly with the postal code. “No,” she said. “There were two test cases for the postal code, and both passed.” Intrigued, I asked what those test cases were, and whether she had thought of any other tests. “There was one for a valid code and one for an invalid one, and I think that’s it,” Marge replied. “What if the application rejects some invalid characters and misses others?” I asked. Then I offered a few more test ideas off the top of my head:

- Does it accept both upper- and lowercase letters?

- Must a space always be used as the separator, and are any other separator characters allowed?

- Does the application verify that it’s an existing postal code?

- Does the application validate the postal code against the other address fields entered?
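The last question can be turned into concrete test inputs. The address form holds Canadian postal codes, and the first letter of such a code identifies a province or territory, so a coarse cross-field check is possible. The sketch below assumes the form has a separate province/territory field holding two-letter abbreviations. That is an assumption, since the story doesn’t describe the other fields. `postal_code_matches_province` and `FIRST_LETTER_REGIONS` are illustrative names, not the application’s code.

```python
# Hypothetical cross-field check: does the postal code's first letter
# agree with the province/territory entered in the same address form?
# (Assumes a separate province field with two-letter abbreviations.)
FIRST_LETTER_REGIONS = {
    "A": {"NL"},
    "B": {"NS"},
    "C": {"PE"},
    "E": {"NB"},
    "G": {"QC"}, "H": {"QC"}, "J": {"QC"},
    "K": {"ON"}, "L": {"ON"}, "M": {"ON"}, "N": {"ON"}, "P": {"ON"},
    "R": {"MB"},
    "S": {"SK"},
    "T": {"AB"},
    "V": {"BC"},
    "X": {"NT", "NU"},  # X is shared by the Northwest Territories and Nunavut
    "Y": {"YT"},
}


def postal_code_matches_province(postal_code: str, province: str) -> bool:
    """Coarse check using only the first letter; unknown letters never match."""
    first = postal_code.strip()[:1].upper()
    return province.strip().upper() in FIRST_LETTER_REGIONS.get(first, set())


# A few cross-field test inputs: (postal code, province, expected match).
CASES = [
    ("K1A 0B1", "ON", True),
    ("K1A 0B1", "QC", False),
    ("x0a 0h0", "nu", True),   # lowercase input, shared first letter
    ("D1A 0B1", "ON", False),  # D is never used in postal codes
]

for code, prov, expected in CASES:
    assert postal_code_matches_province(code, prov) == expected, (code, prov)
```

The check is deliberately first-letter-only. It can flag an obvious mismatch, but it can’t confirm that a code belongs to the city or street entered.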

Marge looked confused, so I came to her aid. “These are questions about the actual behavior of the application. Most of them aren’t answered in the requirements as an explicit ‘expected result’, but we can find out through testing. Without requirements, we might be unsure whether the behavior is ‘right’ or ‘wrong’, but to begin with, we need to find out what that behavior is. Based on our findings, we can assess what a particular behavior might impact, and how, and come up with the next series of tests.”

“First, let’s write down what we know, and what we want to know, about postal codes in general and in our application. To guide our investigation, we’ll use the categories Structure, Function, Data, Platform, Operations, and Time. Together they make ‘SFDPOT’ (‘San Francisco Depot’), a mnemonic and testing heuristic created by James Bach.”

Structure

What is a Canadian postal code, really? Google search is quite a handy ‘testing tool’ for such investigations, and the first match is a Wikipedia article. There it is: we learn about the FSA (Forward Sortation Area) and the LDU (Local Delivery Unit). By the way, not all letters can be used: D, F, I, O, Q, and U are invalid! Going back to the application, we run a quick test, and voilà: a postal code with invalid letters is accepted as valid! Let’s note this bug, along with a reminder to try each invalid letter, one per test, and move on. Also, W and Z can’t be used as the first character, so that’s a couple more tests to try. And, of course, we have plenty of valid combinations to use, especially with the online postal code lookup and validation tool provided by Canada Post. By the way, do we have similar validation functionality in our app?
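The structure rules above are precise enough to write down as a format check. They also yield the “one invalid letter per test” inputs. Below is a minimal sketch. It assumes the canonical form is `A1A 1A1` (FSA, one space, LDU) in uppercase, and it uses `K1A 0B1` only as a format-valid sample. The function names are illustrative and are not taken from the application.

```python
import re
import string

# Letters never used in Canadian postal codes.
EXCLUDED_LETTERS = set("DFIOQU")
# W and Z are additionally not used as the first character.
EXCLUDED_FIRST = EXCLUDED_LETTERS | {"W", "Z"}

LETTERS = "".join(c for c in string.ascii_uppercase if c not in EXCLUDED_LETTERS)
FIRST_LETTERS = "".join(c for c in string.ascii_uppercase if c not in EXCLUDED_FIRST)

# Assumed canonical form: FSA (letter-digit-letter), one space, LDU (digit-letter-digit).
POSTAL_CODE_RE = re.compile(
    rf"[{FIRST_LETTERS}][0-9][{LETTERS}] [0-9][{LETTERS}][0-9]"
)


def is_well_formed(code: str) -> bool:
    """Format check only; says nothing about whether the code actually exists."""
    return POSTAL_CODE_RE.fullmatch(code) is not None


def invalid_letter_cases(sample: str = "K1A 0B1") -> list[str]:
    """One test input per excluded letter, placed first in a well-formed sample."""
    cases = [letter + sample[1:] for letter in sorted(EXCLUDED_LETTERS)]
    cases += [letter + sample[1:] for letter in ("W", "Z")]  # invalid only as first char
    return cases


if __name__ == "__main__":
    assert is_well_formed("K1A 0B1")
    for case in invalid_letter_cases():
        print(case, is_well_formed(case))  # each line should end with False
```

The same substitution idea extends to the third and sixth characters, where D, F, I, O, Q, and U are invalid but W and Z are allowed. This check doesn’t replace the Canada Post lookup; it covers structure only.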

Functions

What functions are connected to the Postal Code field, in the GUI and at the back end? Checking the source of the web page reveals some front-end validation JavaScript that targets invalid formats. Note: we can unit test it, and we can ask the programmers whether they unit test the validation code. Validation may trigger an ‘error message’ response. Let’s note that we need to find out whether the application is supposed to recognize different kinds of invalid postal codes, and what it reports in those cases and how. Along the way, we mark the ‘default’ and ‘reset’ micro-functionalities for further exploration.

We spend some time trying the input functions: typing, pasting, selecting, cutting, and copying, using both keyboard and mouse.

Data

We’ve already learnt a lot about the data we use to represent a postal code. It’s a single line with a certain structure, but it may also be left empty. What we’d like to know is how the data field is defined at the back end, in the database table (or tables), and how the application handles it in memory. What type? What length? Is it stored as a single value? As a pair? Can it be part of another field?

By the way, we need to learn more about the length. Is it six characters? Seven with a separator, or even more? It turns out it can be seven or more. What do we do with that? Let’s find out what can serve as a separator. Is there anything besides the space character? Okay, a dash is accepted, but only a single dash. However, a space-dash-space combination is still accepted. At this point, it seems we’ve learnt something new about the functionality. Let’s go back and see how all these separator combinations are stored and retrieved.
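Probing separators is easier to keep track of when the candidate inputs are generated rather than typed ad hoc. Below is a minimal sketch. The separator list and the sample halves `K1A`/`0B1` are illustrative assumptions. `accepts` is a hypothetical callable standing in for whatever actually submits the form and reports acceptance; it is not shown here.

```python
from typing import Callable

# Format-valid sample halves: FSA and LDU.
FSA, LDU = "K1A", "0B1"

# Separator candidates to probe; the list is meant to grow as new ideas appear.
SEPARATORS = ["", " ", "  ", "-", "--", " - ", "_", ".", "/", "\t"]


def separator_probes(fsa: str = FSA, ldu: str = LDU) -> list[str]:
    """One probe per candidate separator, plus leading/trailing padding variants."""
    probes = [f"{fsa}{sep}{ldu}" for sep in SEPARATORS]
    probes += [f" {fsa} {ldu}", f"{fsa} {ldu} "]
    return probes


def record_outcomes(probes: list[str], accepts: Callable[[str], bool]) -> dict[str, bool]:
    """Run every probe through the system under test and keep what was observed."""
    return {probe: accepts(probe) for probe in probes}


if __name__ == "__main__":
    # Stand-in for the real application: accepts only the canonical form.
    observed = record_outcomes(separator_probes(), lambda s: s == "K1A 0B1")
    for probe, accepted in observed.items():
        print(repr(probe), accepted)
```

Keeping the observed outcomes as data makes it easy to revisit them later, for example to check how each accepted variant was stored.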

Functions

As we’ve figured out with a few tests, the application is ‘smart’ enough to strip some meaningless characters, whether they are used as separators or as leading/trailing parts of the postal code. It also converts the data to uppercase, regardless of input. Now let’s see what purpose all these functions serve.
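Once we have a guess at the clean-up rules, we can write them down as a small model. We then compare the model’s prediction with the value the application actually stores for each accepted input. A mismatch is a question worth asking. Below is a minimal sketch. It assumes the stored form is uppercase with one space between FSA and LDU. That is a guess to be checked, not something the story established. `expected_stored_form` is a hypothetical name.

```python
import re


def expected_stored_form(raw: str) -> str:
    """Our model of the observed clean-up (an assumption, not the app's code):
    drop spaces and dashes, uppercase, then re-insert one space between FSA and LDU."""
    compact = re.sub(r"[\s-]+", "", raw).upper()
    if len(compact) != 6:
        raise ValueError(f"not 6 characters after clean-up: {raw!r}")
    return f"{compact[:3]} {compact[3:]}"


if __name__ == "__main__":
    for raw in [" k1a-0b1 ", "k1a - 0b1", "K1A0B1"]:
        print(repr(raw), "->", expected_stored_form(raw))
```

The model is deliberately looser than the application. For example, it would normalize a double dash that the application rejects, so use it only as an oracle for inputs the application accepted.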

All these data-manipulation tests raise questions about the different ways the postal code can be received. Is it only entered through the GUI? Can it be imported (and exported)? Through a file (and what is the data format in that file)? Through a message (is the data record validated)? Scanned, maybe? Overall, in which operations is it used?

Operations

- Is it used only as reference data, or is it actually used in automated mailing procedures?

- Is it used to validate other fields, or are other fields used to validate the postal code?

- Is it collected for statistical analysis?

- Is it automatically or manually exportable to other applications?

Note that we need to obtain answers to the questions above by talking to the project stakeholders. Once we know more, we can decide what to explore further. Some of our findings will reveal which operations and functions depend on the postal code.

Platform

The way the data is displayed and handled may depend on the operating system’s settings or on the configuration of the application itself. Let’s note that we need to find out about those dependencies.

From a quick chat with the programmer, we learnt that the front-end validation JavaScript was taken from an open-source example. The back-end validation code consists of some in-house functions and some functions that are part of the framework. Note: we must find out how thoroughly the code is unit tested, whether the programmers run automated checks, and on what occasions they switch the validation code to new versions or other libraries. While this is out of our control, being aware of it will help us plan our testing effort.

By the way, how much does the application rely on those external libraries? What will it do if some libraries are not installed, or if an older version of the framework is installed? What if the external API call fails? Is that treated as a passed or a failed validation? Lots of questions to ask; let’s keep them.
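The question “what if the external API call fails?” comes down to a policy: fail open (treat the outage as a pass) or fail closed (treat it as a failure). A test double that forces the outage lets a tester observe which one the application actually chose. Below is a minimal sketch. Every name in it (`ValidatorUnavailable`, `validate`, `service_down`) is hypothetical, because the story doesn’t show the application’s validation code.

```python
from typing import Callable


class ValidatorUnavailable(Exception):
    """Hypothetical error raised when the external validation service can't answer."""


def validate(code: str, external_check: Callable[[str], bool], fail_open: bool) -> bool:
    """Ask the external validator; if it is unavailable, fall back to a fixed policy.
    fail_open=True treats an outage as a pass, fail_open=False as a failure."""
    try:
        return external_check(code)
    except ValidatorUnavailable:
        return fail_open


def service_down(code: str) -> bool:
    """Test double that simulates the external API call failing."""
    raise ValidatorUnavailable("simulated outage")


if __name__ == "__main__":
    print(validate("K1A 0B1", service_down, fail_open=True))   # outage counted as pass
    print(validate("K1A 0B1", service_down, fail_open=False))  # outage counted as failure
```

Neither policy is automatically right. Fail-open keeps users moving but lets bad codes through during an outage, while fail-closed blocks valid input. That trade-off is worth raising with the stakeholders.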

Time

Although we’re exploring only a small part of the product, there may be timing issues associated with it.

First of all, we’d like to find out whether the format or the data validation rules have changed over time, and whether there were any backward compatibility issues.

Second, we have a question (in fact, many questions) about the performance of those validation calls, especially the external ones, under various connectivity conditions and under load.
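A first, rough look at performance doesn’t need special tooling: timing repeated calls already shows whether validation takes milliseconds or seconds. Below is a minimal sketch using Python’s standard `time.perf_counter` and `statistics.median`. `call` is a hypothetical stand-in for whatever invokes the validation, whether a local function or a wrapper around the external API.

```python
import statistics
import time
from typing import Callable


def measure_latency(call: Callable[[str], object], inputs: list[str], repeats: int = 5) -> dict:
    """Time each call with a high-resolution clock and summarize the samples in seconds."""
    samples = []
    for _ in range(repeats):
        for value in inputs:
            start = time.perf_counter()
            call(value)
            samples.append(time.perf_counter() - start)
    if not samples:
        raise ValueError("no samples: inputs is empty or repeats < 1")
    return {
        "count": len(samples),
        "median_s": statistics.median(samples),
        "max_s": max(samples),
    }


if __name__ == "__main__":
    # Stand-in call; replace with the validation call under investigation.
    print(measure_latency(lambda s: s.strip().upper(), ["K1A 0B1", "k1a-0b1"]))
```

This measures a single sequential caller only. Behavior “under load” needs concurrent callers and dedicated load-testing tools, which is exactly the kind of test Marge worries about next.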

Epilogue

..Over an hour has passed. We have learnt a lot about the application, found a few bugs, and raised even more questions. Marge looks overwhelmed: “So much to test! And it’s only a single postal code field. And I have no idea how to test for performance issues, for example.”

Yes, that’s true. We can’t even think of all possible tests, and we certainly don’t have time to try them all. We may lack the skills for some tests and the facilities for others. But as long as we are aware of them, we can and should make our project stakeholders aware too. This matters most for the tests that have a realistic chance of revealing major threats to the value of the product.

And to tell our testing story, the story of what questions were asked and answered and what was explored and found, we can use a mind map.