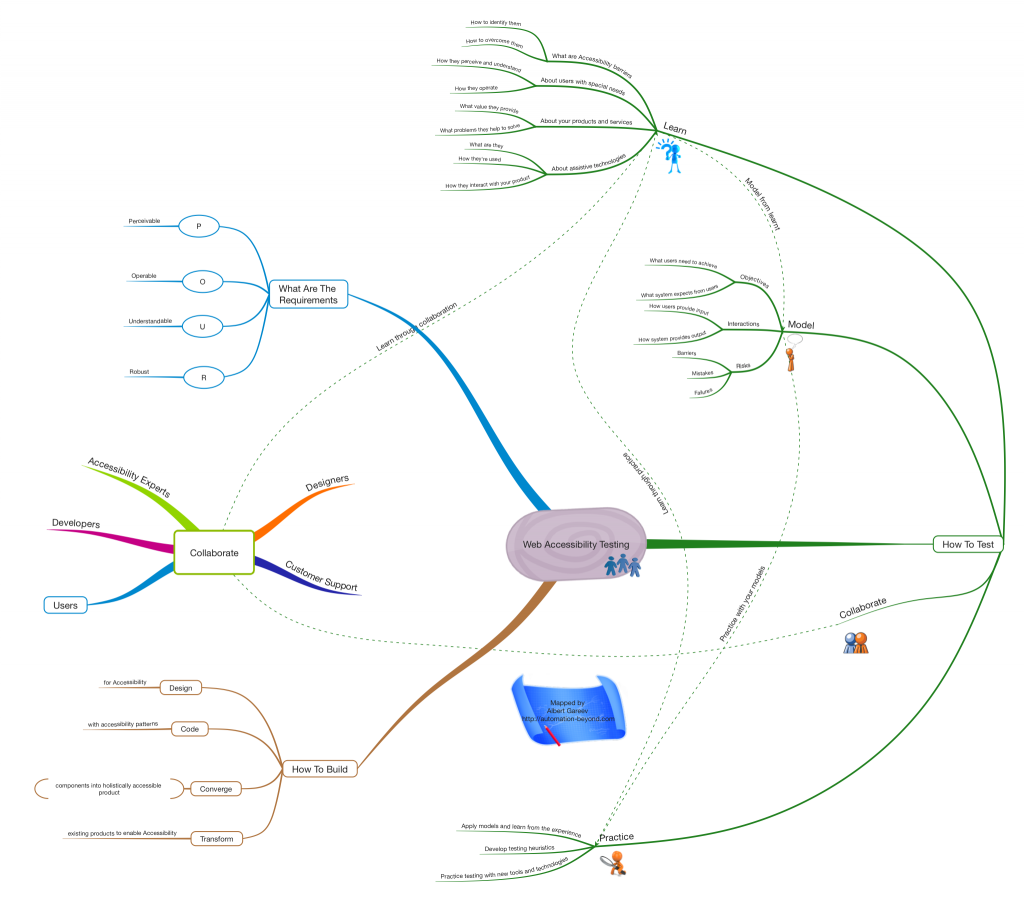

Web Accessibility Testing: Strategy and Mindmap

This is a cross-post of my publication on LinkedIn. I also provide a more elaborate mindmap here.

Foreword

Recently, I’ve been writing quite extensively about Web Accessibility on my blog. In part, that’s because it’s one of my professional interests now. But it’s also because I’ve learnt how important and needed it is.

In Ontario, Accessibility is a hot topic because of the law and the compliance deadlines that are coming up. Businesses are struggling to meet the compliance requirements both “in the letter and in the spirit”. It is indeed tricky, especially for Web Accessibility.

Often, software developers find themselves unfamiliar with the requirements model given by the Web Content Accessibility Guidelines (WCAG). Product owners find the holistic accessibility concept in conflict with a feature-driven delivery approach. Testers are getting lost, too: there are no clear expected results of the kind they are used to verifying.

In this article I outline a Web Accessibility Testing approach and its differences from functional, requirements-based testing.

Holistic Accessibility Testing Strategy

First principal difference: Accessibility Testing is not confirmation-based. Rather than confirming that the application’s functions and features work, testers must identify how they cannot be used because of accessibility barriers.

Second principal difference: Accessibility Testing is human-centric. While imposing technological solutions and constraints on users has become quite common, this has to back off whenever it conflicts with Accessibility. The good news is that other users only benefit, because Accessibility improves Usability as well.

Third principal difference: Accessibility Testing is empirical and heuristic. Technically identical solutions might be accessibility enablers or barriers in different contexts. Testers must experiment and learn from the results. For the same reason, pre-written test scripts provide only poor-quality testing and cannot be relied upon.

In the context of these principles, I define four main components of an Accessibility Testing Strategy.

Learn

- Learn about Web Accessibility
  - What are accessibility barriers?
  - What are the ways to remove them?
- Learn about users with special needs
  - How do they perceive and understand?
  - How do they operate?
- Learn about your products and services
  - What value do they provide for the customers?
  - What problems do they help to solve?
- Learn about user experiences
  - With similar products
  - Facing similar problems
  - From your own Customer Support

Model

- Model risks
  - What can go wrong due to accessibility barriers?
  - What kinds of system failures may happen?
  - What kinds of user mistakes may occur?
- Model operational objectives
  - What does a user need to achieve?
  - What does the system expect from the user?
- Model interactions
  - How does a user provide input?
  - How does the system provide output?
  - What does the user need to keep in mind?

Collaborate

- Collaborate with Accessibility Experts
  - Learn from the examples
  - Bring your findings
- Collaborate with Designers
  - Experiment with their models
  - Provide the earliest possible feedback
- Collaborate with Developers
  - Help them experiment with the implementation, and learn together
  - Provide the earliest possible feedback
- Collaborate with Customer Support
  - Help them investigate the issues
  - Learn from the customer feedback
- Collaborate with Users
  - There are strong online communities: read, ask, and learn
  - Listen to their needs and feedback on similar products

Practice

- Practice your testing models and learn from them
  - Build testing models while exploring the application
  - Try different things as you learn about the implementation in context
- Practice together with designers and developers
  - Help each other to work out accessibility patterns in the product
  - Build credibility of your testing
- Practice sharpening your testing skills
  - Develop critical thinking and systems thinking
  - Study testing heuristics
  - Create your own heuristics and methods
- Practice testing with tools and technologies
  - Try accessibility design tools
  - Try working with assistive technologies and turn them into testing tools
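
As one small illustration of turning technology into a testing tool, here is a minimal Python sketch that scans a page's HTML for places worth exploring: images with no `alt` attribute and form inputs with no explicit label. It uses only the standard library's `html.parser`. The names `BarrierHintCollector` and `collect_hints` are hypothetical, chosen for this example, and the two checks are assumptions about what might be worth a look, not a rule set taken from WCAG. The output is a list of leads for a human tester, not a pass/fail verdict.

```python
from html.parser import HTMLParser

# Input types that normally need no visible text label (assumption for this sketch).
SKIPPED_INPUT_TYPES = {"hidden", "submit", "button", "reset"}


class BarrierHintCollector(HTMLParser):
    """Collects elements that often, but not always, point to a barrier."""

    def __init__(self):
        super().__init__()
        self.images_without_alt = []  # src of each <img> that has no alt attribute
        self.labelled_ids = set()     # ids referenced by <label for="...">
        self.inputs = []              # (id, aria-label) for each visible <input>

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img" and "alt" not in attributes:
            # An empty alt="" is deliberately not reported: it is a common
            # way to mark an image as decorative.
            self.images_without_alt.append(attributes.get("src") or "(no src)")
        elif tag == "label" and attributes.get("for"):
            self.labelled_ids.add(attributes["for"])
        elif tag == "input" and attributes.get("type") not in SKIPPED_INPUT_TYPES:
            self.inputs.append((attributes.get("id"), attributes.get("aria-label")))

    def unlabelled_inputs(self):
        # Inputs wrapped inside a <label> element are not detected here,
        # so every hit still needs a human look.
        return [
            input_id or "(no id)"
            for input_id, aria_label in self.inputs
            if not aria_label and input_id not in self.labelled_ids
        ]


def collect_hints(html):
    """Return a dict of leads to explore manually in the given HTML text."""
    collector = BarrierHintCollector()
    collector.feed(html)
    collector.close()
    return {
        "images_without_alt": collector.images_without_alt,
        "unlabelled_inputs": collector.unlabelled_inputs(),
    }


if __name__ == "__main__":
    sample = """
    <img src="logo.png">
    <img src="chart.png" alt="Sales by quarter">
    <label for="email">Email</label><input id="email" type="text">
    <input id="phone" type="text">
    """
    print(collect_hints(sample))
```

A tool like this only tells you where to start. Whether a reported image or field is a real barrier, and how a user of a screen reader actually experiences it, is still something you find out by experimenting in context.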

Afterword

The strategy I described might appear too different from scripted, test-case-based testing (the one proclaimed “dead” by Google), and testers used only to that kind may struggle if asked to do Accessibility Testing.

But this strategy is well aligned with the modern, context-driven school of testing and with the methodology of Rapid Testing. You can rely on its practitioners to take good care of Accessibility Testing.

2 responses to "Web Accessibility Testing: Strategy and Mindmap"

Thanks - this makes a useful “framework” for how to approach such a task.

Now the problem is to search and map all the common desired test issues :-)

[Author's reply:

See this Practice node that links to everything?

*This* is how you search and find common and uncommon, expected and unexpected product issues.]

@halperinko - Kobi Halperin

After more than 20 years of testing (enough practice?) I still find many new ideas almost daily.

[Author's comment: and how dramatically has the industry changed since then?]

While it’s not directly related to this post, but rather to the vast number of other Mind Maps & Checklists on your blog,

I am still wondering whether we testers couldn’t help each other more by sharing a common Crowd Intelligence DB of test ideas.

[Author's comment: but it is already publicly shared, indexed, and searchable. Centralizing is not necessary.]

I already have a SaaS tool ready for this - now we just need to start recording data :-) .

I see no reason to rewrite the same tests/checks over and over in each company, when there is so much similarity in a huge portion of the tests we do for the 1st release of each product.

Text fields are text fields, date fields are date fields….

Test Ideas I have used can help others and vice versa.

[Author's comment: I see your point. But it’s artifact-centric, while really it’s people and skills that make the difference.]

@halperinko - Kobi Halperin